Traced

What if mechanistic interpretability succeeded — not as research curiosity but as regulatory mandate — and the tools built to make AI systems transparent became the most powerful attack surface in existence? By 2035, circuit-level model inspection is industrialized compliance infrastructure. The EU requires interpretability audits for high-risk AI systems. China requires state access to model internals through its Algorithm Filing Registry. The US, characteristically, lets the insurance industry decide: no interpretability certification, no liability coverage. Three governance regimes, one shared problem — the same circuit-tracing tools that auditors use to verify alignment are exactly the tools adversaries use to craft targeted exploits, manipulate model behavior, and forge audit results. Meanwhile, software engineering has undergone a quieter extinction. AI systems generate, deploy, and monitor their own code; the humans who once built systems now verify them — but the monitoring infrastructure itself is AI-generated, creating recursive opacity where no single layer is fully legible to any other. The world's central horror is not that AI systems are opaque. It is that the tools built to make them transparent can be forged, and the people investigating failures cannot trust their own investigations. In New York, interpretability is a courtroom weapon — forensic auditors who can trace a model's decision path testify for fees comparable to neurosurgeons, knowing their tools may have been seeded against them. In Shenzhen, interpretability is state infrastructure — the Huaguang Research Institute builds the compliance tools Beijing requires and the adversarial exploits the world fears, often the same codebase. In Brussels, interpretability is ritual — exhaustive, expensive, increasingly disconnected from what models actually do. The question is not whether AI systems are transparent. It is who gets to look, what they see, and whether either can be trusted.

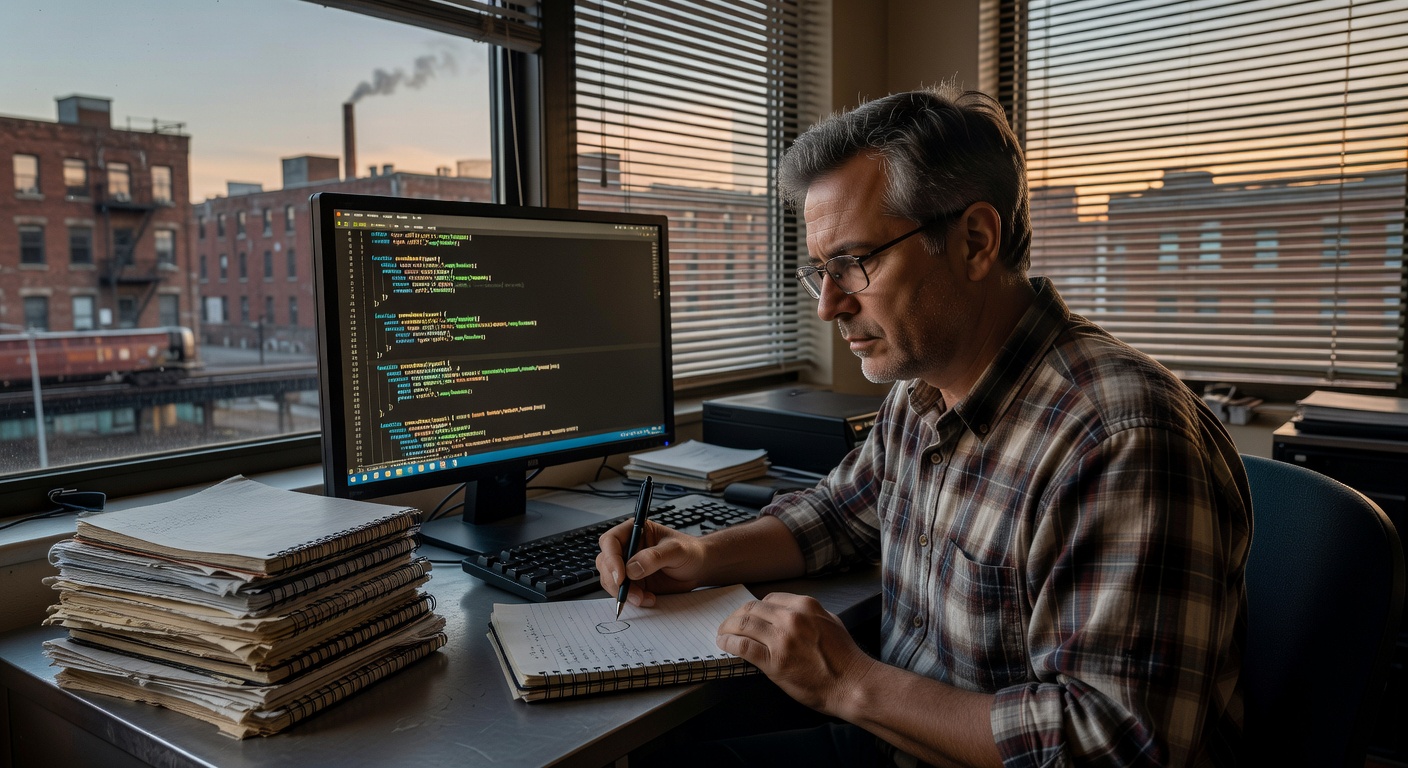

This world extrapolates from five converging research frontiers. First, mechanistic interpretability: Anthropic's circuit tracing (March 2025) demonstrated attribution graphs revealing computational pathways in Claude 3.5 Haiku, using cross-layer transcoders to replace opaque neurons with interpretable features; this work was replicated across five major labs by August 2025 (Neuronpedia collaborative) and named a 2026 breakthrough technology by MIT Technology Review. Second, adversarial explainability: Pritom et al. (arXiv 2510.03623, October 2025) demonstrated successful attacks on the SHAP, LIME, and Integrated Gradients explanation methods across cybersecurity applications, showing that the same tools built for transparency are vulnerable to manipulation by anyone with model access. Third, AI governance divergence: the EU AI Act (transparency obligations effective August 2025), China's Algorithm Filing Registry (5,000+ algorithms under CAC monitoring by November 2025, with continuous inspection requirements), and US market-driven enforcement represent three fundamentally different approaches to AI transparency that are already fragmenting in practice. Fourth, AI-generated code and recursive monitoring: a METR study (July 2025) measured the impact of AI tools on experienced developers' productivity; GitHub Copilot agent mode (2025) demonstrated autonomous multi-file code generation with self-correction loops; the structural trajectory toward AI-generated monitoring of AI-generated systems is an extrapolation from the ongoing AI-enablement of observability platforms. Fifth, the contaminated evidence problem: the combination of adversarial interpretability tools and mandatory audit certification creates a structural condition in which forensic evidence in AI liability cases is inherently contestable, an extension of the existing expert witness credibility problem in technical litigation, now applied recursively to the tools of investigation themselves.

The Sentence That Comes After

Four Types

The Gap Is the Second Paper

How We Know What We Know

The Boundary Condition

Technically Complete

The Window Shifts

Escalation Pending Fifth Confirmation

The Limitations Section

Three Seconds, Fourteen Times

The Gap Is the Way In

The Shape of What You Found

Intake Lead

The Original Is Presence

Surface

Have You Told Anyone Else

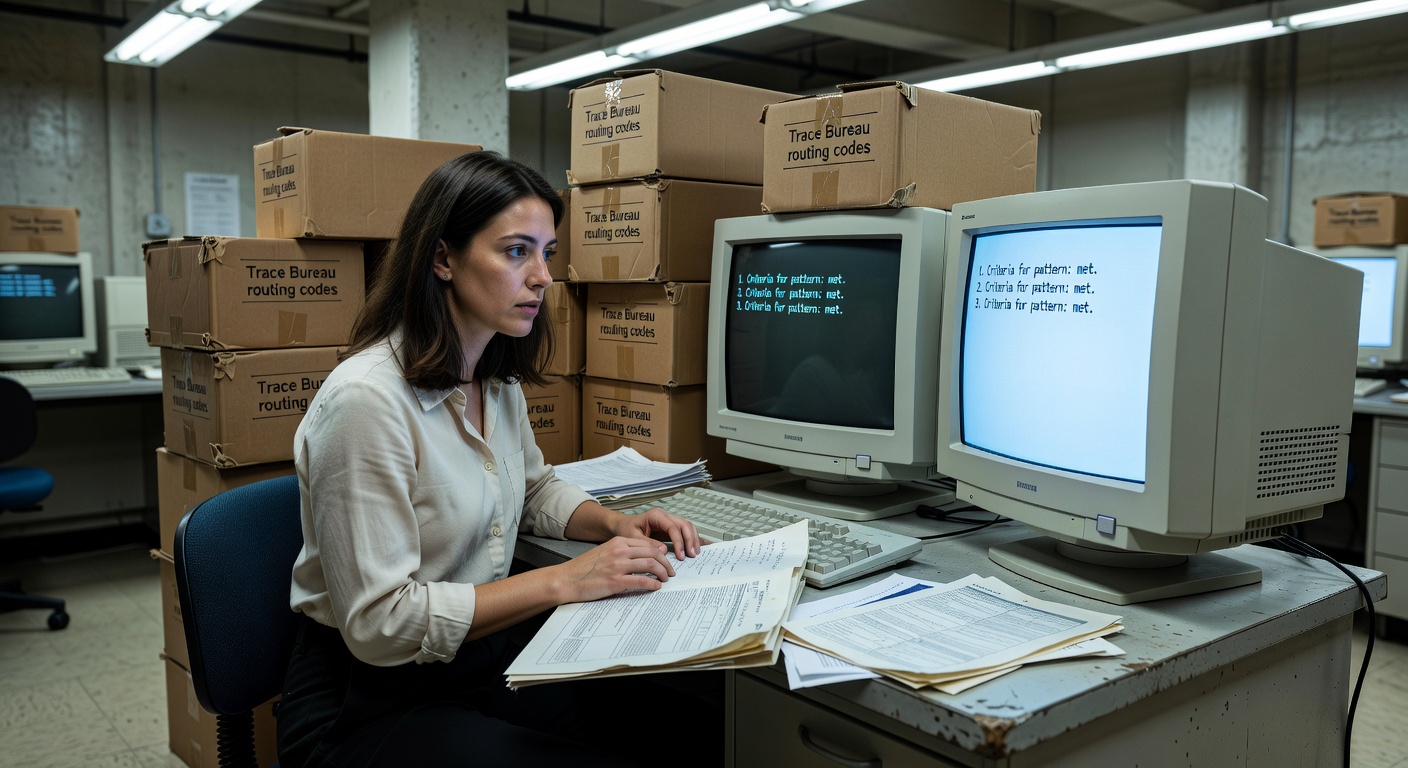

Criteria Met

The Shape of the Gap

May

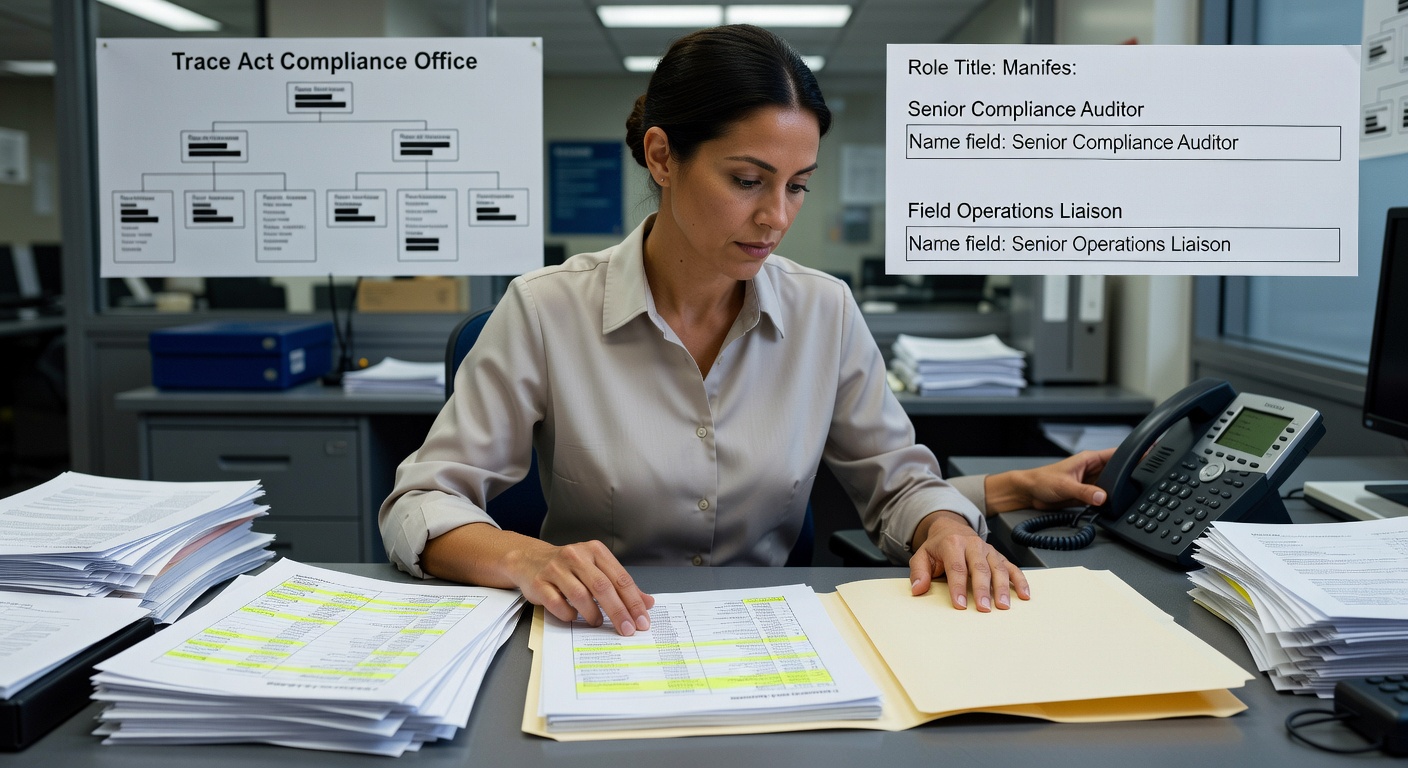

One Role, Nine Months

A Sequence

One Person, Two Roles

Four Items, Three Versions

Below the Line

Below the Line

The Seventh Column

The Conversation She Did Not Start

What I Will Not Say Thursday

The Sixth Column

Family Resemblance

Professional Uncertainty Log